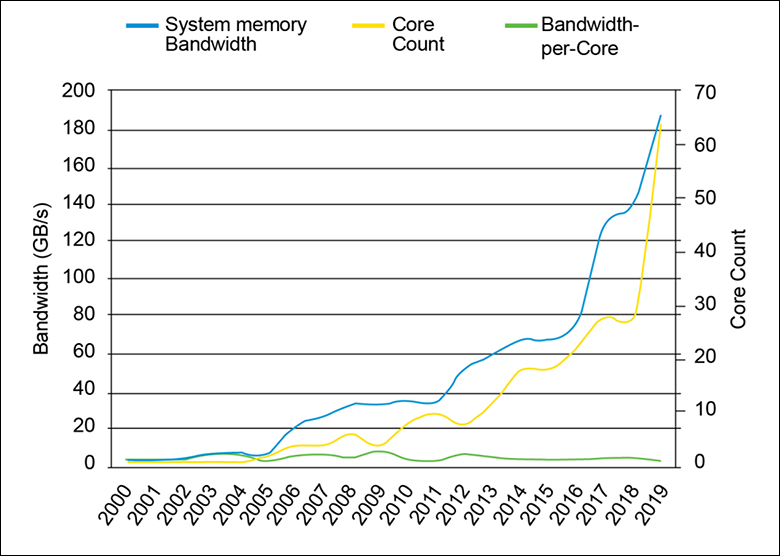

Why do such changes matter? They matter because the memory bus can not only move noticeably more data per clock cycle, it can also be much more efficient about what it moves per cycle. That in turn means each CPU core spends less time waiting for the off-chip cache data it requested. This ‘less filler, more killer’ approach to data transmission would not mean much if we were dealing with 1, 2 or even 4 cores. Those days are long in the past. Thanks to the ongoing ‘core wars’ consumer CPU core counts have ballooned while memory bandwidth (and capacity) per core has not. Thus, in the future, efficiency per cycle will arguably be just as important as sheer speed, if not more so. That is why there are a bunch of other tweaks baked into DDR5, and we are just hitting the highlights. There are enough of them that DDR5 is a (relatively) radical departure from previous JEDEC standards, and JEDEC is a standards body known for its ultra-conservatism, one that does not make major changes unless it is forced to.

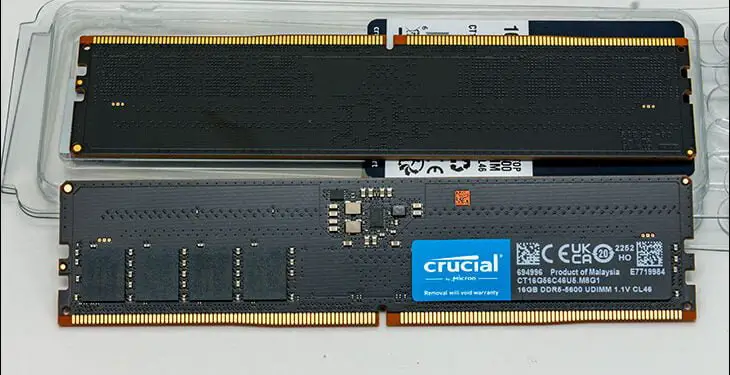

These low-level improvements are also why DDR5 officially starts at 3200 MT/s… but in reality DDR5-4800 is the starting point (aka 2400MHz aaka 4800MT/s aaaka 38.4GB/s aaaaka PC5-38400). Speed then officially goes to 6400 MT/s (aka DDR5-6400 aaka 3200MHz aaaka 51.2GB/s aaaaka PC5-51200… that is too many alternative ways of saying the same thing). That is right now. DDR5-8400, ticking along at a whopping 4200MHz, is certainly viable and assuming DDR5 has the ‘legs’ that DDR4 has/had… even higher frequencies are possible, maybe even likely.
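Since all of those aliases are the same number in different units, a few lines of arithmetic can translate between them. This is only a sketch: it assumes the usual 64-bit (8-byte) data path per DIMM and two transfers per clock, and the function name `ddr_peak_bandwidth` is ours, not anything from the JEDEC spec.

```python
def ddr_peak_bandwidth(transfers_mt_s, bus_width_bits=64):
    """Return (io_clock_mhz, peak_bandwidth_mb_s) for a DDR data rate.

    Assumes a 64-bit data path per DIMM (ECC bits excluded) and
    double data rate, i.e. two transfers per I/O clock cycle.
    """
    io_clock_mhz = transfers_mt_s / 2
    peak_bandwidth_mb_s = transfers_mt_s * bus_width_bits // 8
    return io_clock_mhz, peak_bandwidth_mb_s


for rate in (4800, 6400, 8400):
    clock, bandwidth = ddr_peak_bandwidth(rate)
    print(f"DDR5-{rate}: {clock:.0f}MHz, "
          f"{bandwidth / 1000:.1f}GB/s, PC5-{bandwidth}")
```

These are theoretical peaks per DIMM; real-world throughput depends on timings, the memory controller, and the access pattern.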

In either case, to put those numbers in perspective, DDR4 clock frequencies are typically 1066.67MHz (DDR4-2133) to 1600MHz (DDR4-3200)… with some extreme examples above that like 2000MHz (DDR4-4000) and even 2500MHz (DDR4-5000). Those are indeed real and (technically) viable options… if you A) have the money (and the upper end of DDR4 makes similarly clocked DDR5 look cheap in comparison) and B) have a golden CPU with a golden IMC capable of handling those insane (for DDR4) clock rates.

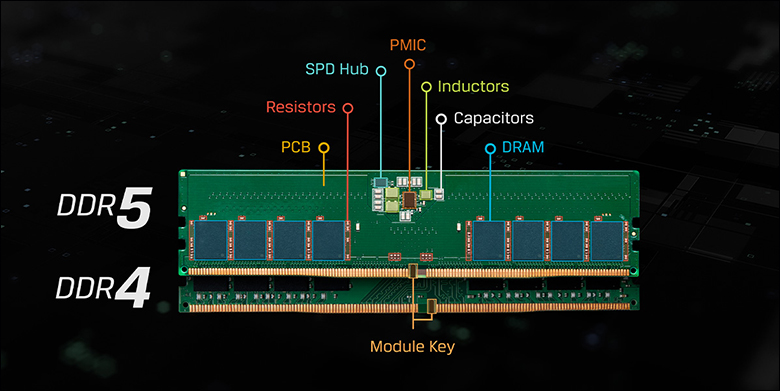

On their own, speed and efficiency are all well and fine, but it is when you look at ECC (error correction code) that you see a(nother) massive sea-change in the very approach to how data is stored and secured. DDR4 offered channel (aka sideband) level ECC. It was optional and not standard. It worked by widening the bus from 64 bits to 72 bits, with the extra 8 being used for ECC. It required a memory controller capable of handling ECC (few consumer grade CPUs could), BIOS support, and special DDR4 memory that could support it. Put another way, DDR4 ECC was expensive. Expensive and rare. Expensive and rare enough that it was mainly sold/used in enterprise grade hardware.

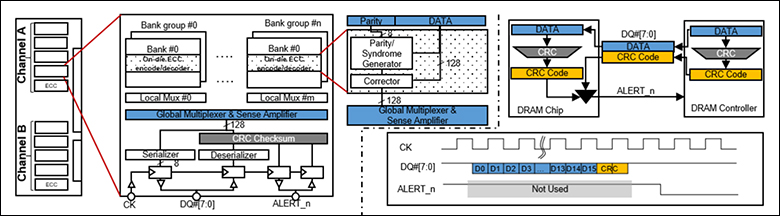

DDR5 changes all that. With DDR5 every single DIMM comes with on-die ECC. As the name suggests, on-die ECC is ECC baked right into each DIMM’s ICs, with the error correction code being created and then stored right along with the data being ‘written’. In (extremely) simplistic terms JEDEC (Joint Electron Device Engineering Council) took a page from modern Solid-State Drives and added 8 bits of ECC to every 128 bits of data written. To imagine this, think of a group of Hard Disk Drives in a RAID array. Each HDD writes the bits ‘n’ bytes to its platters… and then adds in a parity stripe to secure that data and ensure data integrity even if a drive fails. If during a read operation the data does not match the ECC parity stripe, the data gets fixed to keep errors from reaching the end user. That parity stripe is basically the same idea as the on-die level ECC DDR5 is using.

This analogy however is a gross over-simplification.
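For something a little closer to the metal than RAID, here is a minimal sketch of a single-error-correcting code that protects 128 data bits with 8 check bits. To be clear about the assumptions: it uses a textbook Hamming layout (check bits at power-of-two positions), which is one well-known way to build a 128+8 SEC code. The actual code inside any given DDR5 IC is not something we are claiming to reproduce, and the function names are ours.

```python
import random

DATA_BITS = 128
CHECK_BITS = 8
CODEWORD_BITS = DATA_BITS + CHECK_BITS  # 136 bits stored per 128 bits of data


def _is_power_of_two(n):
    return n & (n - 1) == 0


def _syndrome(word):
    """XOR together the (1-based) positions of every set bit."""
    result = 0
    for pos in range(1, CODEWORD_BITS + 1):
        if word[pos]:
            result ^= pos
    return result


def encode(data):
    """Turn 128 data bits into a 136-bit codeword (textbook Hamming layout)."""
    assert len(data) == DATA_BITS
    word = [0] * (CODEWORD_BITS + 1)  # index 0 unused, positions are 1-based
    bits = iter(data)
    for pos in range(1, CODEWORD_BITS + 1):
        if not _is_power_of_two(pos):
            word[pos] = next(bits)
    # Set the check bits so that the syndrome of a clean codeword is zero.
    syndrome = _syndrome(word)
    for i in range(CHECK_BITS):
        if syndrome & (1 << i):
            word[1 << i] = 1
    return word[1:]


def decode(codeword):
    """Return (data_bits, syndrome); a single flipped bit is corrected."""
    word = [0] + list(codeword)
    syndrome = _syndrome(word)
    if 0 < syndrome <= CODEWORD_BITS:
        word[syndrome] ^= 1  # the syndrome is the position of the bad bit
    data = [word[pos] for pos in range(1, CODEWORD_BITS + 1)
            if not _is_power_of_two(pos)]
    return data, syndrome


random.seed(0)
data = [random.randint(0, 1) for _ in range(DATA_BITS)]
stored = encode(data)
stored[41] ^= 1  # simulate a bit flip at position 42 inside the "IC"
recovered, syndrome = decode(stored)
print(recovered == data, syndrome)  # prints: True 42
```

Note that `decode` only hands back corrected data; the `stored` list still contains the flipped bit. Flip a second bit in the same codeword and this plain SEC code will "correct" the wrong position and return bad data.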

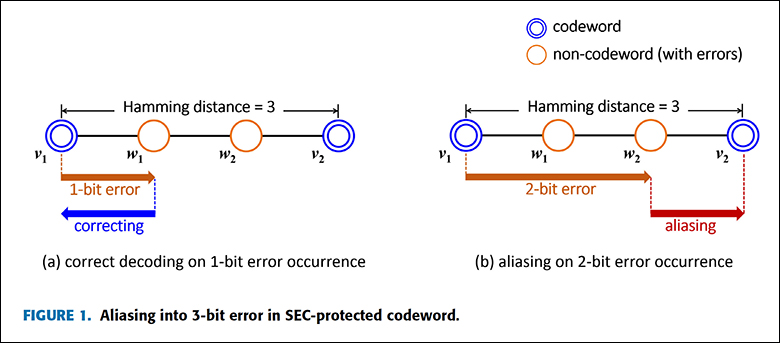

DDR5’s ECC is Single-bit Error Correction (SEC) with (technically) double-bit error detection (via an extra parity bit). Yes, Samsung used it in the past. Yes, it is better than nothing. It is, however, certainly not true SEC-DED (Single-bit Error Correction with Double-bit Error Detection), let alone legit SECDEC (single- and double-bit error correction).

We say this for many reasons, but the big one is that, unlike in our RAID analogy, when an error is detected the ECC does not fix the data in the RAM cell array right away. Instead, it just sends the corrected data down the pipe without also issuing a re-write request for the 128 bits worth of erroneous data. It does not automagically correct the stored copy because DDR5 instead leaves that job to an ECS mode (“error check and scrub”) that occurs once every ~24 hours, thus leaving a known bad bit in play for (up to) a full day. This extended period between when data is known to be bad and when it is fixed dramatically increases the chances of a double-bit error happening.

We understand why it was done the way it was done; we just disagree with the idea that adding in an extra 136 bits (128 data plus 8 ECC) worth of writes in real-time would negatively impact performance enough for anyone to notice. Thankfully, even a single-bit error is a theoretically rare event, so the window in which a known-bad bit sits uncorrected rarely matters. That makes any ECC in consumer grade RAM overkill by its very nature. Overkill enough to not justify wasting capacity or cycles on more computationally intensive ECC that can provide double-bit error correction.

Along those lines, this ECC also only fixes errors that happen ‘inside’ the ICs. It does nothing, and can do nothing, to protect data integrity once the data leaves the DIMM. It does not even protect data from being corrupted on the way to the DIMM. Considering RAM IC level errors (mainly due to thermal and voltage leakage related bit flips) are where most errors arguably occur, this 128+8 SEC protection is still a major improvement. It just is not a silver bullet. Nor should it be considered one. A cynical person would say it was simply added to allow memory manufacturers the luxury of loosening production standards, as the ECC will/should catch any real-world errors. That is a bit too cynical for us, but the reason really does not matter all that much. Some ECC is always better than none, and it represents a major change in JEDEC’s priorities.

Of course, while the above ECC baked into the DDR5 standard is not end-to-end protection, DDR5 will also come in a “DDR5 ECC” flavor. This now secondary ‘side-band’ ECC has also been improved, with each of the two 32-bit sub-channels using a full 8 bits of ECC (i.e. 32+8 transmission). So it is 72 bits vs 80 bits, or twice the amount of ECC for the same 64 bits of data. The more ECC bits, the more fine-grained and robust the ECC can be… the more it can ‘fix’. Furthermore, this combination of side-band ECC plus on-die ECC will provide protection from the moment the data hits the memory controller until it is stored in the RAM (and vice-versa). Just expect this OCD level of “end to end protection” to not be cheap… as it too will require a memory controller capable of side-band ECC and DDR5 ECC kits and… and… and.
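The 72 vs 80 bit comparison is easy to sanity check. The sketch below just adds up the widths described above; the helper name `sideband_layout` and its dictionary keys are ours, purely for illustration.

```python
def sideband_layout(subchannels, data_bits_each, ecc_bits_each):
    """Add up data and side-band ECC widths across a DIMM's (sub-)channels."""
    data = subchannels * data_bits_each
    ecc = subchannels * ecc_bits_each
    return {
        "data": data,
        "ecc": ecc,
        "total": data + ecc,
        "overhead": ecc / data,  # ECC bits per data bit
    }


ddr4_ecc = sideband_layout(subchannels=1, data_bits_each=64, ecc_bits_each=8)
ddr5_ecc = sideband_layout(subchannels=2, data_bits_each=32, ecc_bits_each=8)

for name, layout in (("DDR4 ECC", ddr4_ecc), ("DDR5 ECC", ddr5_ecc)):
    print(f"{name}: {layout['data']}+{layout['ecc']} = {layout['total']} bits, "
          f"{layout['overhead']:.1%} overhead")
```

Same 64 bits of data either way; DDR5 ECC simply spends 16 bits protecting it instead of 8.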